Creating Hime — A VSCode Extension for Chatting with Multiple Generative AI Agents

I built a VSCode extension called Hime (HikariMessage) that lets you chat with multiple AI providers.

It follows a BYOK (Bring Your Own Key) model — you just need an API key from each provider you want to use.

What is Hime?

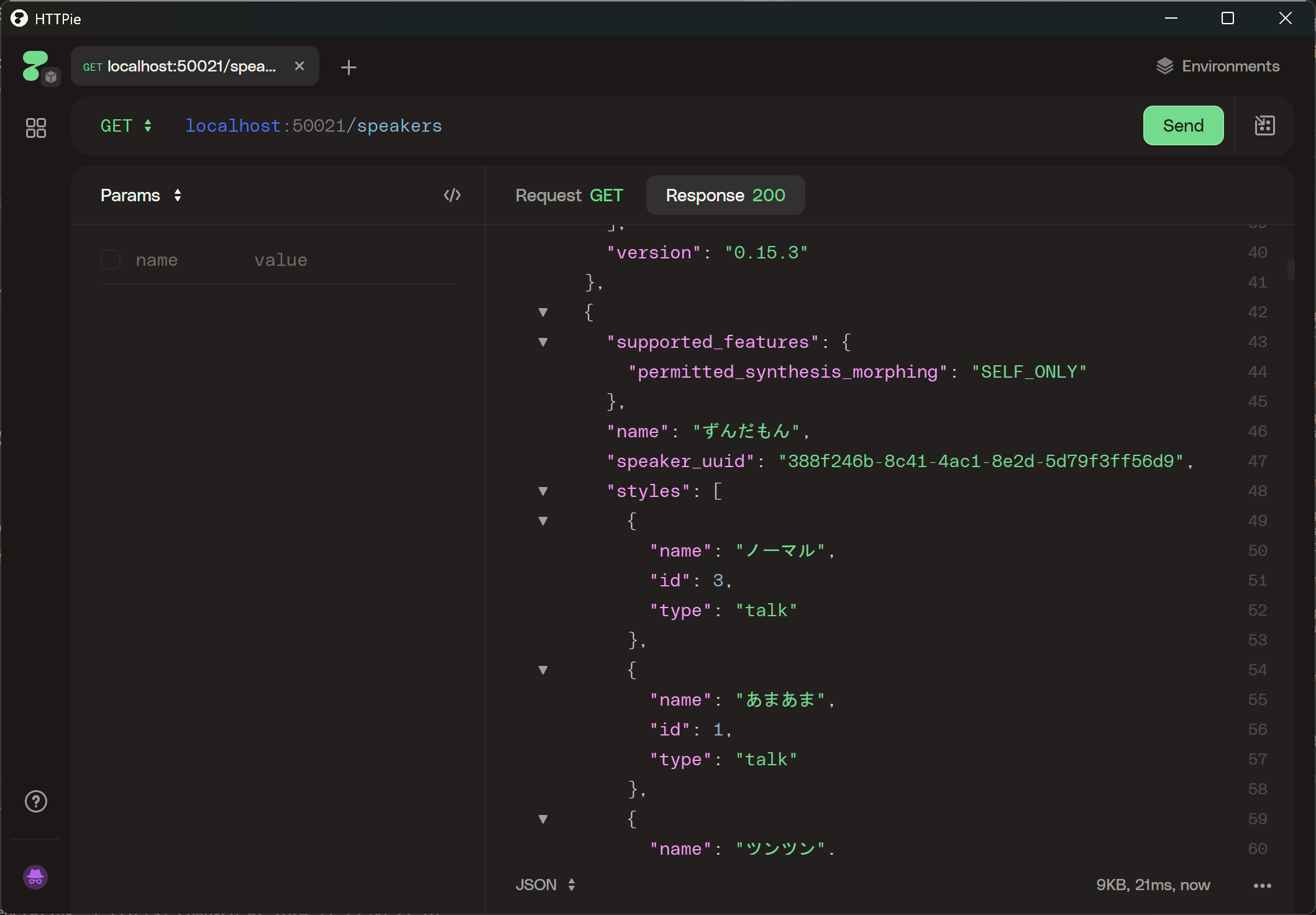

Hime is a generative AI chat extension that lives in the VSCode sidebar. It supports Anthropic, OpenAI, Azure OpenAI, OpenRouter, and Ollama, and lets you switch between providers easily via a dropdown menu.

Key Features

Multiple AI Provider Support

The following providers are supported:

- Anthropic (Claude)

- OpenAI

- Azure OpenAI

- OpenRouter

- Ollama
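
One common way to make providers switchable from a dropdown is to put them behind a shared interface and look them up by ID. The following is a minimal sketch of that idea, not Hime's actual code: `ChatProvider`, `ProviderRegistry`, and the offline `echoProvider` are hypothetical names introduced for illustration.

```typescript
// Hypothetical sketch: none of these names come from Hime's source.
interface ChatMessage {
  role: "system" | "user" | "assistant";
  content: string;
}

interface ChatProvider {
  readonly id: string;    // value stored by the dropdown, e.g. "anthropic"
  readonly label: string; // text shown in the dropdown
  send(messages: ChatMessage[]): Promise<string>;
}

class ProviderRegistry {
  private readonly providers = new Map<string, ChatProvider>();

  register(provider: ChatProvider): void {
    this.providers.set(provider.id, provider);
  }

  // Entries for populating a dropdown, in registration order.
  options(): { id: string; label: string }[] {
    return Array.from(this.providers.values()).map((p) => ({ id: p.id, label: p.label }));
  }

  // Looks up the provider selected in the dropdown; throws on an unknown ID.
  get(id: string): ChatProvider {
    const provider = this.providers.get(id);
    if (!provider) {
      throw new Error(`Unknown provider: ${id}`);
    }
    return provider;
  }
}

// Stand-in provider so the sketch runs without network access or an API key.
const echoProvider: ChatProvider = {
  id: "echo",
  label: "Echo (offline stub)",
  async send(messages) {
    const last = messages[messages.length - 1];
    return last ? `echo: ${last.content}` : "";
  },
};
```

With this shape, adding a provider means implementing `send` once and registering it; the UI only ever deals with IDs and labels.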

Streaming Responses

Responses are rendered incrementally as they stream in, so you can start reading a long answer before it has finished generating.

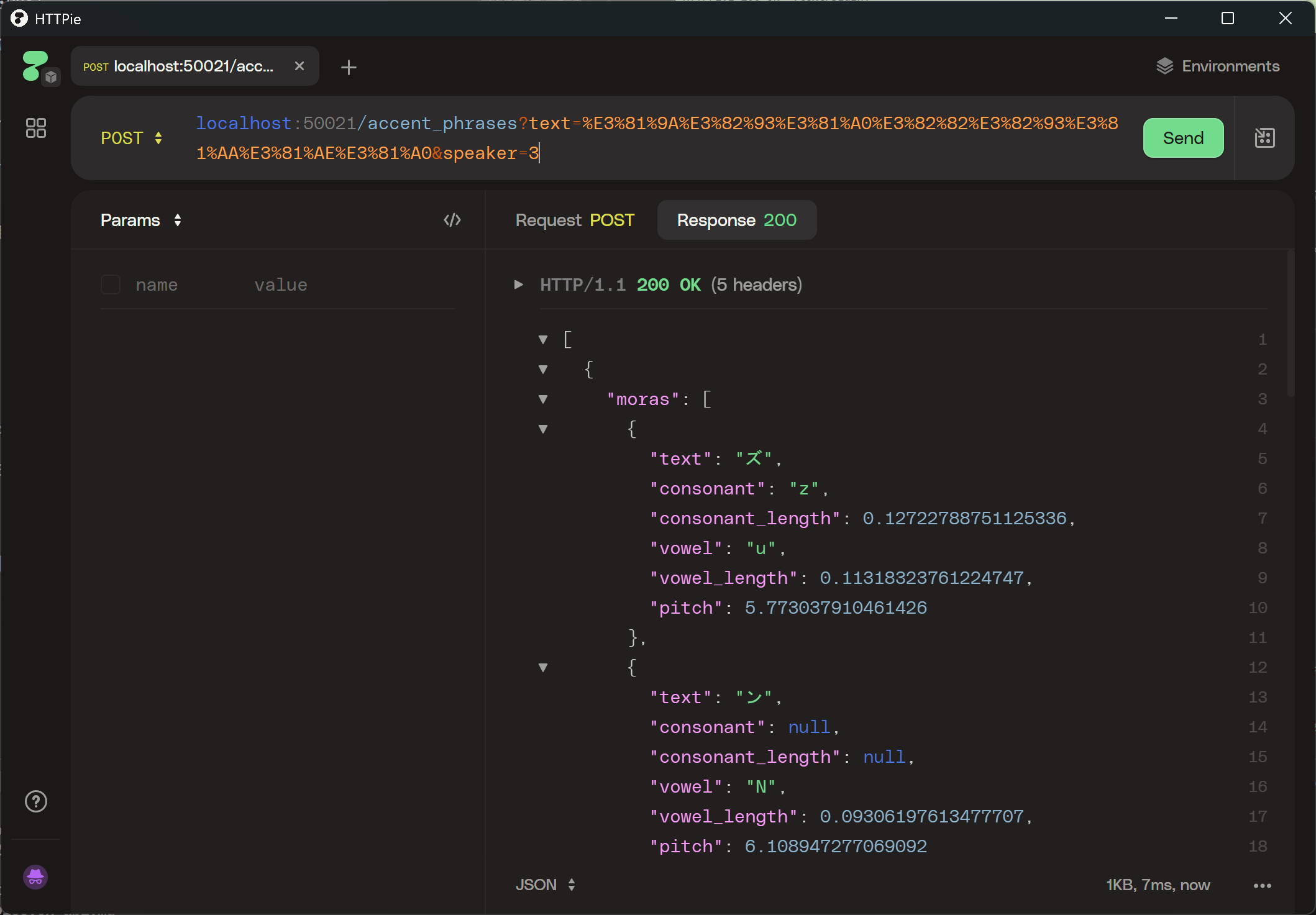
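
Streaming UIs generally consume text chunks as they arrive and re-render the partial result after each one. The sketch below illustrates that loop under stated assumptions: `fakeStream` stands in for a provider's streaming response, and `renderStream` is a hypothetical helper, not a function from Hime.

```typescript
// Hypothetical sketch of incremental rendering; not Hime's actual code.

// Stand-in for a provider's streaming response: yields text chunks one at a time.
async function* fakeStream(chunks: string[]): AsyncGenerator<string> {
  for (const chunk of chunks) {
    // A real provider would be awaiting the next network chunk here.
    await new Promise<void>((resolve) => setTimeout(resolve, 10));
    yield chunk;
  }
}

// Accumulates chunks and calls onUpdate with the full text so far after each one.
async function renderStream(
  stream: AsyncIterable<string>,
  onUpdate: (textSoFar: string) => void
): Promise<string> {
  let text = "";
  for await (const chunk of stream) {
    text += chunk;
    onUpdate(text);
  }
  return text;
}
```

In an extension, `onUpdate` would typically forward the partial text to the webview (for example with `webview.postMessage`) so the sidebar can redraw the message.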

MCP

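You can enable MCP (Model Context Protocol) servers by adding a JSON configuration in the settings. Each key names a server, and its value gives the command and arguments used to start it. For example, this registers the filesystem server: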

    {
      "filesystem": {
        "command": "npx",
        "args": ["-y", "@modelcontextprotocol/server-filesystem", "C:\\Users"]
      }
    }
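
The configuration above is a map from server name to launch command. As a rough sketch of how such a config could be typed and validated before any server is started, the code below infers its shape only from the example: `McpServerConfig`, `McpConfig`, and `parseMcpConfig` are hypothetical names, and Hime may accept additional fields that are not covered here.

```typescript
// Hypothetical sketch: the shape is inferred from the example config,
// not taken from Hime's source.
interface McpServerConfig {
  command: string;
  args: string[];
}

type McpConfig = Record<string, McpServerConfig>;

// Parses the JSON text and checks that every entry has a string command.
// A missing "args" is treated as an empty list (an assumption of this sketch).
function parseMcpConfig(json: string): McpConfig {
  const raw: unknown = JSON.parse(json);
  if (typeof raw !== "object" || raw === null || Array.isArray(raw)) {
    throw new Error("MCP config must be a JSON object");
  }
  const config: McpConfig = {};
  for (const [name, value] of Object.entries(raw as Record<string, unknown>)) {
    const entry = value as Partial<McpServerConfig> | null;
    if (typeof entry !== "object" || entry === null || typeof entry.command !== "string") {
      throw new Error(`Server "${name}" needs a string "command"`);
    }
    const args: unknown = entry.args === undefined ? [] : entry.args;
    if (!Array.isArray(args) || !args.every((a) => typeof a === "string")) {
      throw new Error(`Server "${name}": "args" must be an array of strings`);
    }
    config[name] = { command: entry.command, args: args as string[] };
  }
  return config;
}
```

Validating up front means a typo in the settings surfaces as a readable error instead of a failed process launch.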

Rich UI

- Markdown rendering

- Syntax highlighting for code blocks

- Copy button for code blocks

- MCP tool output display

Persistent Chat History

Conversation history is saved as JSON files under ~/.hime/chats/. You can pick up right where you left off even after restarting VSCode.
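
Persisting history this way can be as simple as writing one JSON file per conversation. The sketch below shows the idea; only the `~/.hime/chats/` directory comes from the description above, while the `<id>.json` file naming, the `StoredMessage` shape, and the `saveChat`/`loadChat` helpers are assumptions made for illustration.

```typescript
import * as fs from "fs";
import * as os from "os";
import * as path from "path";

// Hypothetical sketch: the one-file-per-chat layout and the message shape are
// assumptions; only the ~/.hime/chats/ directory is taken from the article.
interface StoredMessage {
  role: "user" | "assistant";
  content: string;
}

const defaultChatDir = path.join(os.homedir(), ".hime", "chats");

// Writes the whole conversation to <dir>/<id>.json and returns the file path.
function saveChat(id: string, messages: StoredMessage[], dir: string = defaultChatDir): string {
  fs.mkdirSync(dir, { recursive: true });
  const file = path.join(dir, `${id}.json`);
  fs.writeFileSync(file, JSON.stringify(messages, null, 2), "utf8");
  return file;
}

// Reads a conversation back; returns an empty list if nothing was saved yet.
function loadChat(id: string, dir: string = defaultChatDir): StoredMessage[] {
  const file = path.join(dir, `${id}.json`);
  if (!fs.existsSync(file)) {
    return [];
  }
  return JSON.parse(fs.readFileSync(file, "utf8")) as StoredMessage[];
}
```

Passing `dir` explicitly makes the helpers easy to try against a temporary directory without touching real history.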

Automatic System Prompt

Workspace information, OS details, and the context of your currently open editor are automatically injected into the system prompt. Just say "fix this file" and the AI already knows what you're looking at.
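
To make the idea concrete, here is a minimal sketch of assembling such a system prompt from editor context. The `EditorContext` fields, the `buildSystemPrompt` function, and the prompt wording are all hypothetical; in a real extension the values would likely come from APIs such as `vscode.workspace.workspaceFolders` and `vscode.window.activeTextEditor`.

```typescript
// Hypothetical sketch: field names and prompt wording are illustrative only.
interface EditorContext {
  os: string;
  workspaceRoot?: string;
  activeFile?: { path: string; languageId: string; text: string };
}

// Builds a plain-text system prompt, skipping any context that is unavailable.
function buildSystemPrompt(ctx: EditorContext): string {
  const lines: string[] = ["You are a coding assistant running inside the user's editor."];
  lines.push(`OS: ${ctx.os}`);
  if (ctx.workspaceRoot) {
    lines.push(`Workspace: ${ctx.workspaceRoot}`);
  }
  if (ctx.activeFile) {
    lines.push(`Active file: ${ctx.activeFile.path} (${ctx.activeFile.languageId})`);
    lines.push("Contents:");
    lines.push(ctx.activeFile.text);
  }
  return lines.join("\n");
}
```

Because the active file's path and contents are already in the prompt, a request like "fix this file" needs no further explanation from the user.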

Setup

Requires Node.js 20+ and VSCode 1.96+.

    git clone https://github.com/Himeyama/hime
    cd hime
    npm install
    npm run watch   # Development: watches both Extension Host and Webview simultaneously

Then press F5 in VSCode to launch an Extension Development Host window with Hime loaded. API keys are entered in the settings panel in the sidebar and stored encrypted using VSCode's SecretStorage.
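
For readers unfamiliar with SecretStorage: an extension reaches it through `context.secrets`, which offers `get`, `store`, and `delete`. The sketch below shows how per-provider API keys could be saved and read back through it. It is an illustration under assumptions, not Hime's code: the `hime.apiKey.<provider>` key scheme and the helper names are hypothetical, and `SecretStore` is a small interface mirroring those three methods so the example runs outside VSCode.

```typescript
// Hypothetical sketch: the key naming scheme is an assumption, not Hime's.
// SecretStore mirrors the get/store/delete methods of VSCode's SecretStorage
// (available as `context.secrets` in an extension).
interface SecretStore {
  get(key: string): PromiseLike<string | undefined>;
  store(key: string, value: string): PromiseLike<void>;
  delete(key: string): PromiseLike<void>;
}

const secretKey = (providerId: string): string => `hime.apiKey.${providerId}`;

// Saves the key for one provider, trimming stray whitespace from pasted input.
async function saveApiKey(secrets: SecretStore, providerId: string, apiKey: string): Promise<void> {
  await secrets.store(secretKey(providerId), apiKey.trim());
}

// Returns the saved key, or throws if the user has not entered one yet.
async function requireApiKey(secrets: SecretStore, providerId: string): Promise<string> {
  const apiKey = await secrets.get(secretKey(providerId));
  if (!apiKey) {
    throw new Error(`No API key saved for provider "${providerId}"`);
  }
  return apiKey;
}

// In-memory stand-in (NOT encrypted) for trying the helpers without VSCode.
class MemorySecrets implements SecretStore {
  private readonly values = new Map<string, string>();
  async get(key: string): Promise<string | undefined> { return this.values.get(key); }
  async store(key: string, value: string): Promise<void> { this.values.set(key, value); }
  async delete(key: string): Promise<void> { this.values.delete(key); }
}
```

Inside an extension, `context.secrets` could be passed wherever `SecretStore` is expected, since it provides the same three methods.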

Wrapping Up

Hime's strength is that you can interact with AI without leaving your editor — and even delegate tool execution via MCP. Give it a try!

Repository: https://github.com/Himeyama/hime